Understanding understanding – could an A.I. cook meth?

What would it take to say that an artificial system “understands” something? What do we mean when we say humans understand something? I asked those questions on Twitter recently and it prompted some very interesting debate, which I will try to summarise and expand on here.

Several people complained that the questions were unanswerable until I had defined “understanding”, but that was exactly the problem – I didn’t have a good understanding of what understanding means. That’s what I was trying to unpick.

I know, of course, that there is a rich philosophical literature on this question, but the bits of it I’ve read were not quite getting at what I was after. I was trying to get to a cognitive or computational framework defining the parameters that constitute understanding in a human, such that we could operationalise it to the point that we could implement it in an artificial intelligence.

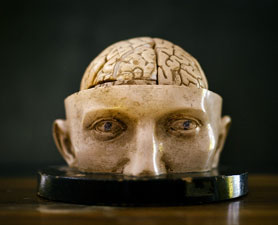

So, rather than starting with a definition, let me start with an illustration and see if we can use it to tease out the parameters that characterise understanding, especially the difference between understanding something and just knowing something or being able to do something.

I know it when I see it

Here goes (and if you haven’t watched Breaking Bad, I can only apologise for my choice of example):

Jesse Pinkman knows how to cook crystal meth. But Walter White understands the process. Jesse can follow the steps of the protocol. He knows that he should do step 1, then step 2, then step 3 – he can carry out the algorithm.

Walter knows why they do step 1, then step 2, then step 3. Whatever constitutes that understanding, it is easily recognisable to us because he invented the protocol, because he can teach it to someone else, and because he can modify it as needed to scale it up or if various ingredients become hard to obtain.

So, what’s the difference? What parameters differ between Jesse’s brain and Walt’s brain when it comes to their knowledge of cooking meth? Why does Walt’s state constitute understanding, while Jesse’s does not?

Jesse’s knowledge is specific, isolated, fragmentary. He understands that when you add substance A to substance B you produce substance C. Walt’s knowledge, by contrast, is situated in a much wider and deeper context. He understands how substance A reacts with substance B to produce substance C. That’s because he knows the properties of these substances that drive their reactivity. So, he has a level of knowledge that is more fundamental and more general, to which he can relate more specific facts. And, beyond that, Walt understands why those substances have those properties. He has even more fundamental levels of knowledge about types of substances – not just those particular ones – and about their physical structures at a deeper level that produce their chemical properties. That’s why he could come up with the protocol in the first place and that’s why he can change it as circumstances demand.

So, we have a hierarchy of understanding – that, how, why. At each level we are situating some facts in the context of a wider body of knowledge, but also, crucially, a deeper level of knowledge. But not one that is reductionist – that is bogged down in the details of a lower ontological level. Instead, it is one that sees the important principles at that lower level and how they determine the properties at the higher level.

The simple accumulation of knowledge – the addition of extra facts – does not confer deeper understanding. What is required is the abstraction of general principles from all that knowledge, so that new facts can be situated within a logical, coherent framework – one that crucially entails causal relationships.
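The contrast between knowing that and knowing how or why can be made concrete with a toy sketch. Everything in it is invented for illustration: the substance names, the "donor" and "acceptor" roles, and the single reaction rule are hypothetical stand-ins, not real chemistry. A memorised table answers only the exact question it has seen, whereas a rule stated over general properties can handle a substitute it has never encountered.

```python
# Toy illustration only: substances, roles, and the rule are all hypothetical.

# "Knowing that": a memorised table of specific facts.
MEMORISED = {("A", "B"): "C"}


def recall(x, y):
    """Return the memorised product, or None if this exact pair was never seen."""
    return MEMORISED.get((x, y))


# "Knowing how/why": general properties that specific facts can be related to.
PROPERTIES = {
    "A": {"role": "donor", "group": "g1"},
    "A2": {"role": "donor", "group": "g1"},  # an untried substitute for A
    "B": {"role": "acceptor"},
    "D": {"role": "inert"},
}


def predict(x, y):
    """Predict a product from properties: a g1 donor plus an acceptor gives C."""
    px, py = PROPERTIES[x], PROPERTIES[y]
    if px["role"] == "donor" and py["role"] == "acceptor":
        return "C" if px["group"] == "g1" else None
    return None


print(recall("A2", "B"))   # the table has nothing to say about the substitute
print(predict("A2", "B"))  # the rule generalises to it
```

The point of the sketch is only that the second representation supports modification of the protocol, while the first does not; it says nothing about how such rules would be learned.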

Responses from the hive-mind

This ties in to a lot of the responses I got to my questions on Twitter. These mostly converged onto ideas of abstraction – of categories, principles, causal relationships – and abduction, or abductive reasoning – forming a hypothesis from a set of observations, or thinking about why some things were observed or some facts hold true. It’s basically guessing, but, here, in a way that is informed by wider knowledge and experience.

But there were a lot of other parameters suggested, and I have organised those below in a kind of ascending order. They start with those needed for a very simple kind of understanding, which might be programmed into an artificial intelligence. (Indeed, A.I. is exceptionally good at some of them.)

And they ascend to properties that might be thought of as necessary for a higher kind of understanding, which may ultimately depend on internal models and representations, consciousness, awareness, and our own histories of embodied experience. And, who knows, maybe those properties could be built into an artificial intelligence too and maybe they are exactly the type of properties that would be required for what some people might consider “real” understanding, as opposed to a convincing simulation of it.

The elements of understanding

1. Categorisation – building a kind of internal database of types of things, with knowledge of the properties that define each type. These necessarily have a hierarchical nature. So, Rover is a golden retriever, which is a subtype of dog. Dogs are characterised by A, B, and C – these collective properties form the schema or concept of “dog”, while properties D, E, and F characterise the subtype of golden retrievers. And, of course, dogs are themselves subtypes of mammals, which are subtypes of animals, and there are schemas for those levels as well.

2. Generalisation – categories allow you to generalise. When you see a Corgi for the first time you may still be able to recognise it as a type of dog and make some predictions about its behaviour, because you understand some general things about dogs.

3. Abstraction – the activity that enables you to build categories. You have to be able to see which are the properties that are essential to define a category at each level, and which are incidental.

4. Compression – a related way to look at abstraction. How much detailed information can you throw away when moving from one level to the next, while retaining the important properties? Being able to see the forest for the trees depends on extracting trends or patterns, while ignoring a lot of the details, much of which may be noise.

5. Pattern recognition – again, this is related to abstraction and compression. The ability to detect statistical regularities in a set of observations, but hopefully without over-fitting noise.

6. Abduction – drawing hypotheses about the state of a system. Now we’re starting to go beyond just the properties of objects, to consider the properties of the relations between objects.

7. Causal reasoning – this is where we really get into the meat of it, in my opinion, as we begin to understand systems, not just things or types of things. If I understand a system, I should be aware of the causal relations between its elements and the causal dependencies of system behaviour on those relations. I should have an understanding of causality both within levels and between levels.

8. Counterfactual reasoning – one way to demonstrate such understanding is to be able to consider counterfactuals and their likely consequences. If such and such were NOT the case, how would that affect the operation of the system? To perform such reasoning, you have to know which details are important for the causal dynamics of the system.

9. Prediction – this is an extension of counterfactual reasoning. If you really understand a system you should be able to predict what would happen if you changed something about it, or predict how it would behave in some new scenario.

10. Manipulation – if we can manipulate and control a system with predictable outcomes, many would say that demonstrates understanding. It certainly demands knowledge of the causal relations at the level of operation one is aiming to control, though it is possible to achieve without a deeper understanding of the underlying principles at play.

11. Invention – this is an even deeper level of understanding. It requires not just knowing the causal relations in a given system but understanding the more abstract principles underlying those relations. Those then become elements that can be reconfigured into new arrangements to carry out novel functions or operations.

12. Analogy – again, this is a question of abstraction of deeper principles. First, seeing that some particular causal relation between two things is a relation between two types of things. Then seeing that that particular relation is itself a type of relation with more general properties. This allows you to go beyond knowledge or understanding of a particular system to understanding of systems in general.

13. Mathematical description – some would argue you haven’t really understood a system until you can express it in mathematical terms, the most abstract level of reasoning. I think that goes too far – most of our understanding is intuitive or logical, without involving formal mathematics. However, mathematical expression can reveal deep correspondences that might not otherwise be apparent, such as the correspondence between Shannon information and thermodynamic entropy, or between predictive inference in perception and Bayesian statistical reasoning.

14. Awareness? – Do you have to know you understand something to understand it? I don’t see any reason why you would, but, again, some people might have this as a criterion.

15. Articulability? – Being able to explain something to someone else or teach them how to do something can certainly be a good test of understanding, but I don’t know if it is a necessary criterion – maybe just an additional way we recognise it.

16. Embodied phenomenological experience? – Do you have to have lived experience of something to truly understand it? This feels very vague, but hints at the idea that one thing that may limit artificial intelligence is that its knowledge is not gained by active exploration in the world. Perhaps disembodied knowledge will never reach a human level of understanding that comes with being embodied agents in the world. Like I said, it’s vague but links to the question of how information comes to have meaning, and whether meaning is required for true understanding.

17. Perspective? – Does understanding necessarily entail some subjective perspective? This is really just the context of the life history of the organism or agent that is doing the understanding. Clearly it can be a barrier to shared understanding, when there are implicit assumptions of wider context, prior positions, or values that are not themselves shared.
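The first two items in the list, categorisation and generalisation, are the easiest to sketch in code. The following is a minimal illustration, assuming a simple tree of categories in which each level adds its own defining properties; the `Category` class and the specific properties ("barks", "short legs" and so on) are hypothetical placeholders for the A, B, C and D, E, F mentioned above.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Category:
    """One level in a hierarchy of types, with the properties it adds."""
    name: str
    properties: set = field(default_factory=set)
    parent: Optional["Category"] = None

    def all_properties(self):
        """Collect properties from this category and every ancestor."""
        inherited = self.parent.all_properties() if self.parent else set()
        return inherited | self.properties

    def is_a(self, other):
        """True if `other` is this category or one of its ancestors."""
        node = self
        while node is not None:
            if node is other:
                return True
            node = node.parent
        return False


# Illustrative properties only; they stand in for the schema at each level.
animal = Category("animal", {"moves"})
mammal = Category("mammal", {"has fur"}, parent=animal)
dog = Category("dog", {"barks"}, parent=mammal)
retriever = Category("golden retriever", {"golden coat"}, parent=dog)

# Generalisation: a newly encountered subtype inherits what is known of dogs.
corgi = Category("corgi", {"short legs"}, parent=dog)
print(sorted(corgi.all_properties()))
```

This captures only the bookkeeping. The harder part, deciding which properties are essential at each level, is the abstraction step in item 3, and nothing in this sketch does that work.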

I am sure there are other criteria or properties that could be considered, but I think those capture most of the responses I got on Twitter. The key thing, to my mind, seems to be embedding knowledge at one level in the context of knowledge of another level, in particular knowledge of the causal relations of a system.
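To make "knowledge of the causal relations of a system" a little more tangible, here is a toy sketch in the spirit of a structural causal model. The three variables and the rules linking them are invented for illustration, and the `simulate` function is a hypothetical helper, not a reference to any library. Each variable is computed from its causes, unless an intervention forces it to a fixed value, which is what lets us ask the counterfactual question from item 8.

```python
def simulate(inputs, interventions=None):
    """Evaluate a tiny causal model.

    `inputs` supplies the external condition ("rain").
    `interventions` optionally forces any variable to a fixed value,
    overriding the rule that would normally determine it.
    """
    interventions = interventions or {}
    values = {}

    def settle(name, compute):
        # Use the forced value if there is one, otherwise apply the causal rule.
        values[name] = interventions[name] if name in interventions else compute()

    settle("rain", lambda: inputs["rain"])
    settle("sprinkler", lambda: not values["rain"])  # runs only on dry days
    settle("wet_grass", lambda: values["rain"] or values["sprinkler"])
    return values


observed = simulate({"rain": False})
# Counterfactual: same dry day, but suppose the sprinkler had NOT run.
no_sprinkler = simulate({"rain": False}, {"sprinkler": False})

print(observed["wet_grass"], no_sprinkler["wet_grass"])
```

A system that only stored the observation "the grass was wet" could not answer the second question. One that encodes which variables depend on which can, and that is the sense in which causal knowledge supports counterfactuals and prediction.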

Now, the question is whether that discussion gets us any closer to knowing what we’d need to build into an A.I. to make it capable of real understanding.

Artificial intelligence

Some people would argue that A.I. is already capable of some kind of understanding. For example, DeepMind’s incredibly impressive engine AlphaZero knows how to play chess. But does it understand it? It certainly seems to, if you measure it by its success, and also by the kinds of moves it makes. Indeed, some people argued that its style of play – the “beauty” of some of its moves and strategies – shows more understanding than any human has ever demonstrated.

On the other hand, its knowledge is all at one level. It doesn’t know chess is a game – it doesn’t know what a game is. It doesn’t know it’s a metaphor for war. It doesn’t relate its knowledge of chess to its knowledge of anything else, because it doesn’t know about anything else.

Maybe all it would need to develop this understanding is to be exposed to lots more information. It clearly has the computational power to recognise patterns in massive amounts of data and to make predictions from massive amounts of prior experience. But is that how humans develop understanding? Or do they do something different? Do they actively extract rules and principles with less data? Are they wired to make abstractions and draw general inferences? Is our neural architecture particularly attuned to causal structure?

Perhaps it is the hierarchical architecture of the cerebral cortex that fosters the development of understanding, with each level being able to abstract more general principles by integrating across multiple units at the level below. This gives more opportunity to see emergent causal relations, to draw more distant analogies, to derive deeper principles.

Indeed, the expansion of the human cortex is characterised not by the expansion of individual areas, but by the addition of more areas, particularly of association cortex – the bits that integrate information from lower levels. We don’t just have more raw brainpower – evolution has extended the hierarchy of our neural architecture to higher and higher levels.

Of course, A.I.s like AlphaZero have a hierarchical structure, with multiple layers, but it is all devoted to one thing. Maybe if we hooked a bunch of them up in parallel, each doing different things (say, playing different games), and then added another layer on top, that layer could extract more general principles (like aspects of game theory in general).

I don’t know whether the preceding discussion is really any use in thinking more precisely about how to operationalise and implement understanding in artificial systems, but maybe some people from that field will chime in. (This excellent blog on the interactions between the A.I. field and neuroscience is relevant.)

Understanding in neuroscience

The discussion above also resonates with another question I asked recently on Twitter. I had been browsing the articles in the latest issue of Neuron, which span all levels of neuroscience, from the molecular up to human cognition, and wondered what it would take for a single person to really understand all of them.

This is one of the flagship journals of our field, yet most neuroscientists, myself included, can only really understand a small slice of the papers in each issue. This reflects the history of the field, which is, in fact, a loose agglomeration of many traditionally distinct disciplines – molecular and cellular neuroscience, developmental neurobiology, genetics, animal behaviour, electrophysiology, pharmacology, neuroanatomy, circuit and systems neuroscience, cognitive science, computational neuroscience, neuroimaging, psychology, psychiatry, neurology…

The reason the discussion of understanding is particularly relevant to neuroscience is that this field is by its nature hierarchical. These different disciplinary approaches are not defined solely by their methods but by their objects and levels of analysis, from single cells, to microcircuits, to extended systems and brain regions, to the ultimate emergent level of mind and behaviour.

The key challenge for any neuroscientist is to see across these levels. To understand how the dynamics of molecules within a cell determine its electrophysiological properties, in ways that determine its role in information processing within a microcircuit, which is itself a subcomponent of a larger circuit, the activity of which has some mental correlates, and so on. This is a challenge that one would hope could be met by new generations of students who do not have the baggage of having been educated in one of the historical silos.

But it is very easy to get bogged down in the details at each level. What is important is to abstract the general principles at play, which are required to understand the emergent functions of the next level up.

In fact, I think that’s not just the approach that we need to take to understand the nervous system as scientists. I think it’s the approach that the nervous system itself takes. From neuron to neuron, from region to region, from level to level, information is abstracted from all the noisy details, and this information has meaning that is understood in the context of lots of other information.

Perhaps it’s anthropomorphic to say that each neuron is trying to understand the neurons talking to it or that each level is trying to understand the one below it. But, then again, perhaps not – perhaps there is a deep mathematical correspondence between understanding at the psychological level and even the simplest elements of information processing at the neural level.

With thanks to all those who engaged on Twitter – you're the reason it's such a great platform for scientific and philosophical discussions!
