Go big or stay home! Small neuroimaging association studies just generate noise.

Figuring out the neural basis of differences between individuals or groups in all kinds of psychological traits or psychiatric conditions is a major goal of modern neuroscience. In humans, investigating this has necessarily relied on non-invasive tools like functional or structural magnetic resonance imaging (MRI). Many thousands of studies have been published following a similar design: measure some functional or structural neuroimaging parameters across the whole brain and compare them across individuals or groups to look for ones that are statistically associated with variation in some psychological trait, performance on a cognitive task, or membership of one or other group. Most of these studies have sample sizes in the tens or at best the low hundreds. A new study by Scott Marek and colleagues shows convincingly that those sample sizes are at least one or two orders of magnitude too low to produce reliable results.

This shouldn’t really be news to anyone who’s been paying attention either to the field of neuroimaging or to wider developments around the reproducibility and robustness of scientific research. First, let me make clear that there are lots of neuroimaging studies that don’t suffer from this problem. These include, for example, studies investigating brain activation patterns when people are performing various kinds of tasks. These don’t need huge samples of people because they use tightly controlled experimental set-ups and usually run hundreds of trials in each individual.

It is the exploratory studies looking for brain correlates of individual or group differences in some phenotype that are susceptible to the problems that come with low statistical power and the aligned issues of excess researcher degrees of freedom and publication bias. The existence of these problems is perfectly evident just from surveying the published literature. For example, there are many hundreds of papers published claiming to have found a neuroimaging “biomarker” that is associated with any one of numerous psychiatric conditions (with depression, schizophrenia, and autism leading the list). None of these have held up. Similarly, there is no shortage of reported associations of brain imaging parameters with various personality traits of one kind or another – again, none of these appears to be robust or reproducible. The empirical observation therefore is that this literature is not producing real findings.

The reasons why are now painfully clear. I say painful because the field of genetics has been through this process already, more than a decade ago. The problem arises in performing exploratory studies looking for association with any one of thousands of parameters in samples of only dozens of individuals. It’s clearly a recipe for finding associations that are merely statistical blips. If you add in a high degree of flexibility in the way the data are analysed and filter the results with a hefty dose of publication bias (which selects for “positive” findings), what you get is a literature that is hopelessly polluted by spurious results.
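To make that concrete, here is a minimal simulation sketch. It is an illustration only, not an analysis from any of the studies discussed. It assumes 25 subjects and 1,000 imaging parameters, and both the parameters and the phenotype are independent random noise, so every "hit" is spurious by construction. The names `pearson_r` and `simulate_null_study`, and all the numbers, are hypothetical choices.

```python
import math
import random

def pearson_r(x, y):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(x)
    mx = sum(x) / n
    my = sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def simulate_null_study(n_subjects=25, n_params=1000, seed=0):
    """Correlate a noise phenotype with many noise 'imaging parameters'."""
    rng = random.Random(seed)
    phenotype = [rng.gauss(0, 1) for _ in range(n_subjects)]
    rs = []
    for _ in range(n_params):
        param = [rng.gauss(0, 1) for _ in range(n_subjects)]
        rs.append(pearson_r(param, phenotype))
    return rs

# Smallest |r| that reaches two-tailed p < 0.05 with n = 25 (df = 23), approx.
R_CRIT = 0.396

rs = simulate_null_study()
n_hits = sum(abs(r) > R_CRIT for r in rs)
max_abs_r = max(abs(r) for r in rs)

print(f"'Significant' associations in pure noise: {n_hits} of {len(rs)}")
print(f"Largest spurious |r|: {max_abs_r:.2f}")
```

With an uncorrected threshold of p < 0.05, roughly 5% of the pure-noise parameters should clear the bar, and the strongest of them will look like an impressively large correlation.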

This accurately characterises the literature of “candidate gene association studies”, with hundreds of papers claiming association between some genetic variants and some phenotype, based on very small sample sizes. By the mid-2000s, the field had started to recognise that these reported associations from small studies were not robust. Very large consortia were formed to pool samples, especially of patients with various genetic conditions, and new technologies emerged that enabled genome-wide association studies (GWAS) to be carried out. These were conducted with much greater statistical rigour: correcting for the hundreds of thousands of parameters being tested, including essential replication samples from the get-go, and reporting all results, statistically significant or not. GWAS have been extremely successful in identifying many thousands of common genetic variants associated with all kinds of traits and conditions. They also clearly demonstrated that the previously reported candidate gene associations were spurious.

By analogy, Marek and colleagues look at BWAS – brain-wide association studies – which compare thousands of brain imaging parameters across individuals or groups to look for any that are associated with a given phenotype. By pooling thousands of samples from multiple datasets, and running exhaustive simulations of different study designs, they show convincingly that sample sizes of thousands are needed to detect realistic effect sizes. In particular, they looked at two kinds of imaging parameters – one functional: resting-state functional connectivity (RSFC), and one structural: cortical thickness – and assessed whether these measures across the brain are associated with general cognitive ability or a general measure of psychopathology.

The results are sobering. The median effect size of associations detected (the correlation value, r) was 0.01. Squaring that value gives the proportion of variance explained, so the correlation between the brain imaging parameter and the phenotype “explained” only 0.01% of the variance in the phenotype. They go on to show that:

“The top 1% largest of all possible brain-wide associations (around 11 million total associations) reached a |r| value greater than 0.06. … Across all univariate brain-wide associations, the largest correlation that replicated out-of-sample was |r| = 0.16.” However, they note that: “Sociodemographic covariate adjustment resulted in decreased effect sizes, especially for the strongest associations (top 1% Δr = −0.014).”

So, with samples in the thousands, these studies detected associations with very small effect sizes. The corollary is that if the real effects are so small, samples in the thousands are needed to detect them. This means that previously published associations with samples in the tens (on average, n=25) are VERY likely to be false positives. The only “results” that could be statistically distinguished with such small samples are ones with unrealistically large effect sizes. In fact, they state that: “At n = 25, two independent population subsamples can reach the opposite conclusion about the same brain–behaviour association, solely owing to sampling variability.”
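A rough back-of-the-envelope sketch shows why. It uses the standard Fisher z approximation for a Pearson correlation and assumes a single two-tailed test at alpha = 0.05 with 80% power. Those settings are illustrative assumptions, not the paper's; correcting for millions of tests would demand far larger samples. The function names are hypothetical.

```python
import math
from statistics import NormalDist

_norm = NormalDist()  # standard normal distribution

def variance_explained(r):
    """Proportion of phenotypic variance accounted for by a correlation r."""
    return r * r

def n_for_power(r, alpha=0.05, power=0.80):
    """Approximate n needed to detect a true correlation r (Fisher z method)."""
    z_alpha = _norm.inv_cdf(1 - alpha / 2)
    z_beta = _norm.inv_cdf(power)
    return math.ceil(((z_alpha + z_beta) / math.atanh(abs(r))) ** 2 + 3)

def sampling_range(true_r, n, level=0.95):
    """Approximate range in which the sample r falls, given the true r and n."""
    z = _norm.inv_cdf(1 - (1 - level) / 2)
    half_width = z / math.sqrt(n - 3)  # standard error of Fisher z is 1/sqrt(n-3)
    centre = math.atanh(true_r)
    return math.tanh(centre - half_width), math.tanh(centre + half_width)

# Effect sizes quoted above: median, top 1%, and largest replicated
for r in (0.01, 0.06, 0.16):
    print(f"|r| = {r:.2f}: explains {variance_explained(r):.2%} of variance, "
          f"needs n of about {n_for_power(r):,}")

low, high = sampling_range(0.06, n=25)
print(f"True r = 0.06 at n = 25: sample r plausibly between {low:.2f} and {high:.2f}")
```

Under these assumptions, the median effect needs tens of thousands of subjects, and at n = 25 the observed correlation for a true r of 0.06 can plausibly land on either side of zero.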

The implications are stark (and will sound harsh, but now that we know what we know there's no point pretending it's not true):

First, the published literature of such studies must be treated with a huge degree of suspicion, or ignored altogether. This won’t be easy. No one is suggesting that all those papers be retracted. This means they will sit there in the literature and likely will continue to be cited by the unwary, as candidate gene association studies often still are.

Second, researchers should stop doing these small studies. Funding agencies should stop funding them. Reviewers should stop endorsing them. Journal editors should stop agreeing to publish them. This will be, to put it mildly, an enormous culture shock. It was in genetics, and it required major sociological changes on the part of all stakeholders to move past the flawed methodologies and perform this research on the scale required to produce reliable results.

But what will small labs do that don’t have the resources for such huge studies? What will students do if they can’t run experiments on practically sized samples that can be recruited over the course of a PhD? I don’t know but I would suggest something else. There’s really no sound argument (scientific, sociological, or financial) for continuing with these kinds of studies when the evidence is so clear that they don’t yield trustworthy findings. They simply waste everyone’s time and effort and resources and pollute the literature with noise.

One solution is to form the kinds of consortia seen in genetics and indeed already in operation for brain imaging. This will no doubt be required to collect samples of sufficient size to detect these very small associations with brain imaging parameters.

There is, however, a deeper question that I think should be asked. Why do we think such associations should exist? For GWAS, the rationale was extremely clear – the traits and conditions under investigation had been shown to be partly heritable. That is, some non-trivial proportion of the variance in these phenotypes is attributable to genetic variation across the population. This means that there must exist genetic variants in the population that affect these phenotypes. GWAS is hunting known quarry.

The same cannot be said for brain imaging parameters. We simply don’t know that the kinds of traits that people look for associations with are indeed associated with differences in brain structure or function that can be detected at the (really pretty crude) level of MRI. Clearly, we can’t trust the massive literature of small studies that have suggested this. But what other evidence is there in support of that hypothesis? Of course, we should expect something to be different in the brains of people with autism or schizophrenia or depression versus people without, and similarly there must be some neural differences between people who are more or less neurotic or intelligent or aggressive.

But should we expect those differences to manifest at the macroscale of neuroimaging – at the level of the size of different brain regions or the thickness of bits of the cortex or the structural or functional connectivity between various structures? I mean, maybe they would, but there doesn’t seem to be a very good reason to expect that, as opposed to expecting much more distributed and microscale differences.

Any such differences (macro- or microscale) might also be quite idiosyncratic, given the clinical diversity of diagnostic categories and high-level nature of psychological constructs. There are many ways to end up with a diagnosis of autism or schizophrenia and many different profiles of distinct psychological facets that would manifest as high extraversion or neuroticism. Add the very high background level of individual differences in brain imaging parameters to begin with and you have to question the confidence with which imaging association studies have been pursued. There’s certainly no “ground truth” of heritability that provides a solid motivation for these kinds of studies.

Indeed, it is notable that the phenotypes examined in the study by Marek are extremely non-specific: general cognitive ability and general psychopathology. If any phenotypes are going to show consistent associations across thousands of people (non-specifically across the whole brain), it should be these. [One technical question is whether the imaging results were adjusted for total brain size, which has a known, substantial correlation with general cognitive ability].

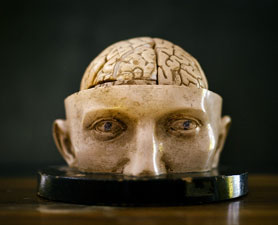

But should the success in detecting very weak and non-specific associations with these very general phenotypes imply that similarly massive samples could find associations between more specific kinds of phenotypes and more specific brain regions? That doesn’t seem to follow, necessarily. Frankly, the premise harkens back to naïve ideas of phrenology – that different brain functions could be reliably associated with the size of different brain regions (as revealed by bumps in the overlying skull). I'll put my hand up to say I've been involved in these kinds of studies myself in the past, but my expectations have changed over time.

One final question that it seems should be answerable by people proposing to do these kinds of BWAS at the scale that would be required (with the financial resources that would be required): what would be the point? Say we find that some psychological trait or psychiatric condition is statistically (really weakly) associated across thousands of people with a tiny difference in some brain imaging measure, like the thickness of some little bit of the cortex (or multiple bits). Then what? Would such a discovery (explaining a tiny fraction of the variance in the phenotype) really tell us much about the underlying neurobiology or cognitive operations? Would it even provide an entry point for future work? Maybe it would, but it seems incumbent on such researchers to explain how any positive (really weak) associations would be followed up.

The comparison with GWAS may be useful again in this regard. I have questioned the feasibility of following up on common genetic variants that have almost negligible individual effect sizes in these studies. But at least there is a discrete and definite genetic difference there that some people have and others don’t, that can be assessed in biological assays at various levels (biochemical, cellular, physiological). The same does not hold for brain imaging differences. These would not even be “there” in individuals – they’re not something you have or you don’t – only an upward or downward trend in some continuous parameter across many people. It’s not obvious (to me at least) what you could do with that information. (But perhaps there are very good options I have not thought of).

So, if I were reviewing a grant proposing a BWAS, I would first want to make sure that it had a sample size in the thousands, well enough powered to detect a small effect size. But I’d also want to know why such an association should be expected in the first place. And, if any such association were found, how that information would really advance our understanding of the neural basis of the psychological functions or conditions in question.
